FOUND SUBJECTS at the Moonspeaker

Agnotology (2018-09-25)

The three wise monkeys at tosho-gu shrine, japan. Photo courtesy of MichaelMaggs under Creative Commons Attribution-Share Alike 2.5 Generic license via wikimedia commons, january 2004.

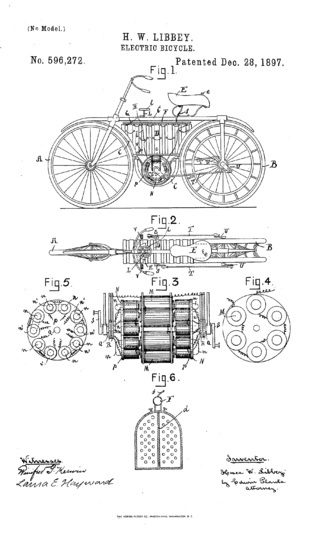

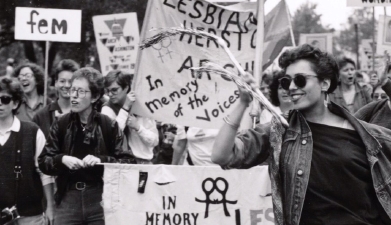

When I started working on this particular thoughtpiece, it was with no expectation of stumbling on a fascinating wikipedia rabbit hole. I will forgo most of the interesting things that popped up, except for this: the "three wise monkeys" are japanese in origin, designed as an illustration of a confucian maxim. So they are an interesting example of a visual proverb that is often presented with no sign of its actual provenance and appropriated for use to entertain children, among other tasks it may not have been related to in the first place. They are also a great example of an idea, its transmission, and reception that could be studied by "agnotologists" insofar as anyone dares call themselves that. That rabbit hole followed my first encounter with "agnotology," which according to the admirably concise wikipedia definition is "the study of culturally induced ignorance or doubt, particularly the publication of inaccurate or misleading scientific data." The article states the term was invented in 1995, and in many ways it corresponds to what I have written about in the two part essay For Heaven's Sake Don't Tell Them Anything, referring to it there as "anti-information" (part one | part two). I suppose agnotology has the benefit of not implying to those familiar with matter and antimatter that anti-information must destroy information. But I have to admit being caught between a sense of "this is bullshit" and "this could be useful." Useful because the aspect that people who study "agnotology" are trying to foreground is inaccurate or absent information that is culturally constructed. And it is important, critical even, to face the fact that ignorance can be imposed or actively chosen. Actively chosen ignorance is not a comfortable topic. Not that this is exactly where the author was going in the article where I learned about this field. To the contrary, George W. Colpitts was busy explaining the differences between fur trader and tourist Peter Fidler's company and personal journals. 
With no disrespect to Colpitts intended, I have to admit that this was the example that made me feel like calling bullshit on the whole thing. An exaggerated response, and obviously not too serious a one. Still, worth unpacking.

UPDATE 2018-07-26 - Mikhail Bakhtin spoke about the changing nature of what we read over time in a broader sense on page 4 of his "Response to a Question from Novy Mir," in Speech Genres and Other Late Essays, University of Texas, Austin 1986. "It seems paradoxical that... great works continue to live in the distant future. In the process of their posthumous life they are enriched with new meanings, new significance; it is as though these works outgrow what they were in the epoch of their creation. We can say that neither Shakespeare himself nor his contemporaries knew the 'great Shakespeare' whom we know now... But do we then attribute to Shakespeare's works something that was not there, do we modernize and distort them? Modernization and distortion, of course, have existed and will continue to exist. But that is not the reason why Shakespeare has grown. He has grown because of that which actually has been and continues to be found in his works, but which neither he himself nor his contemporaries could consciously perceive and evaluate in the context and culture of their epoch."

You could describe my reaction as having several different interfolded layers. One fold corresponds to, "well, no kidding personal and company journals will report different things, and the differences will be defined and shaped by culture." I know, I know, just because something seems common sensical does not mean supporting evidence should not be sought. Perhaps common sense is what needs such testing most of all. 
Yet I can't help but wonder, is the interest here partly related to the encounter with clear evidence that a white man had a culture and adjusted his behaviour according to it, not just with respect to his clothes or how he sipped his tea, but also with respect to how he wrote up documents for his employer? Another fold could be labelled, "honestly, how many more pseudo-fields can people who think they are white invent so they can continue staring at their navels?" A person could point out that rereading Peter Fidler's journals together in this way has revealed new things. I wouldn't deny that by any means. Rereading often repays us in this way, especially when rereading older documents from time periods and places other than our own. Each time, if we are lucky and not too tired, we notice things that had escaped us before maybe just because we were tired before, more often because we are able to bring new perspectives to what we are reading. Many "classic" novels have this quality, and a key factor in the popularity of J.K. Rowling or J.R.R. Tolkien's books is that they have so much detail and secondary world building behind them that they mimic this feature of non-fictional writing like that in journals and logs. Still another fold is a bit scrunched up. At one corner is my appreciation of linguistic inventions, and thinking that often a step towards making something approachable is to make a name for it. At the other corner is skepticism of this very habit in this case, because it seems less like a tool to think with, and more like a tool to colonize with. Somehow in that article, agnotology doesn't seem to be helping with questioning how Fidler's carefully selected elisions helped reconstruct "north america" as empty. He deleted references to Indigenous people, and reconstructed all the living beings as what in today's parlance is called "resources." 
That is more than the article I am citing was meant to do within its word limit, to be sure, and it is possible, even likely that that is the next step in the series it is part of. But what does slapping a new label on studying the social construction of knowledge really give us? After all, isn't that the original point of constructive post-structuralism, which also emphasizes the social construction of knowledge, meaning what people get to know and what they don't? Then again, agnotology is just one word and seems to have a much clearer definition overall than "post-structuralism," which doesn't have a widely accepted set of definitions so much as a set of definitions from several different fields that apply it. They have a "family resemblance" to each other, as Wittgenstein might say, but they are not identical. On top of that, agnotology was invented by a person engaged in "science" rather than those pesky humanities where post-structuralism tends to live. Which suggests some interesting politics behind the proliferation of the term.

Electric Scooters (2018-09-18)

Electric scooters and their relatives are proliferating ever faster these days. In principle they sound like they should be good things, needing less parking space, quieter, lower emissions, and there are types that charge the battery when coasting down hills and the like. There are retrofitting kits to add small electric motors to regular bicycles. In terms of making cities more livable for people rather than carefully modified parking environments for cars with internal combustion engines, this sounds pretty good. Collisions and similar between people and electric bike riders are likely to be less destructive to everyone concerned, since the vehicles in question don't consist of at least 500 kilograms of metal plus other stuff. So my sarcastic perspective on them aside, it must seem like a win-win all around. 
Obviously it isn't, or I wouldn't be writing this, and not too long ago it would never have occurred to me to. Alas, my mind has been changed rather firmly on the subject. I commute primarily by foot to my places of work, and on a lovely day in midsummer I was doing just that, taking a long-way-round route because the weather was fine, but mostly because I was still a bit prone to getting lost, because I had just moved into that specific neighbourhood. Everybody knows how this goes. Walk close to the edges of yards where there is no sidewalk, as is typical of the 1960s era suburb I currently reside in. Cross safely to the next block, and then thread the path among the various buildings to my office. On this particular route, it is possible to walk the whole way on sidewalks, you know, those things people are supposed to walk on, and one significant stretch runs along the edge of a parking lot. That turns out to be a problematic stretch as I discovered. Strolling along the sidewalk, I noticed another person walking along behind me who seemed to be a bit puzzled about me, which was strange. But I was on the sidewalk, and my music wasn't up too loud, being as I was walking outside. It was a surreal experience to me to run into a person who wanted good jogging headphones, and furiously took back the set analogous to mine because he could hear traffic over his music. He was so annoyed he told me all about it when he saw mine. Anyway, as I was about to leave the parking lot section of sidewalk behind, a person rode up to me on their electric scooter, from behind me, and proceeded to loudly berate me for walking on the sidewalk and getting in their way. To their mind, this was because I couldn't hear them. Now, that may actually be true, not because my music was up loud enough for that to be an issue, but because actually, the quietness of electric vehicles is a bit of a safety issue. 
By now, we are all used to dealing with internal combustion engines that make noise well ahead of their arrival, and that is helpful for safety purposes. I did ask this person why they were riding on the sidewalk, because I was quite surprised to see them there. As a person would be, since motorized vehicles including electric ones, are supposed to stick to the road. Nor do I know why this person saw fit to ignore the guy who was walking a couple of feet behind me. Then again, maybe he wasn't wearing headphones. Perhaps in another thoughtpiece I will chronicle the amazingly rude things people will cheerfully say if they notice you are wearing headphones and they deem you to be younger than thirty, so they feel licensed to insult you and inform everybody around you what a rude person you must be. (Irony is lost on folks inclined to make such comments, I know.) Anyway, said scooter rider having harangued me then set off, still riding on the sidewalk, and headed off through the midst of a pedestrian intersection full of students and academic staff heading in all different directions. Admittedly I had mostly forgotten about this. It was an annoying experience but not a hill to die on, and interestingly the local police began ticketing people who were riding their electric two wheeled vehicles that were not retrofitted bicycles rather vigorously a couple of weeks later. What brought it back to mind was beginning to stumble on articles discussing the looming clash between pedestrian rights and the rights of people riding electric bicycles and their close cousins to ride safely. I don't actually agree that there is a clash here, or if there is one, it isn't about pedestrians versus people riding motorized vehicles. Rather, it is between people who feel entitled to take over any space they like as soon as it suits them once they are riding on or inside a vehicle driven by something more than their own arms or legs. 
Many of us at least know of somebody who is mild mannered and fine to be around, until they get inside their car. Inside the car they seem to enter a world in which they are soldiers perpetually hopped up on angry pills, driving a massive tank and forced to move about within a dreadful, slow-moving stream of vile horse-drawn model T fords. They rant, they swear, they threaten outrageous acts of so-called "road rage." Perhaps we originally knew this person and then hastily retreated. In any case, they seem to feel once behind the wheel, that the road belongs to them, and since they now get to most places they'd like to go in far less time than a day's walk, how dare there be anything or anyone getting in their way. I do appreciate how frustrating it is to realize that but for rush hour, you could have been wherever you were going an hour ago. I've been there more than once when commuting in larger centres by bus. But being on a motor-driven vehicle does not give anybody eminent domain over the road and any sidewalks on either side of it. I'm fairly sure this isn't taught as part of the licensing process for motor vehicles, so people must be absorbing this attitude from observations of other drivers and riders. On top of that, I suppose that the electric scooter riders may feel a special annoyance when they can't take as full advantage of the size of their vehicles as they would like by riding far closer to their workplaces for instance, and locking up at the bike rack. All of which really is too bad, because electric scooters and similar vehicles have a lot working in their favour. But I suppose as long as there is so much by way of advertising and the behaviour of other people reinforcing the idea that pedestrians are contemptible for using their feet to get around and taking up space to do so, then it will be difficult to avoid unpleasant encounters. 
Even if the pedestrians in question forgo all pretence to listening to music players, walking on anything but a sidewalk, or walking anywhere near a parking lot.

Parades Versus Marches (2018-09-11)

Today's foray into the OED is all about marches and parades. After all, there are plenty of parades to go around these days, although not nearly so many marches. Since armies do quite different things when they march than when they are on parade, the messages of these two ways of walking around in groups are far from the same. A quick perusal gives the following paraphrased definitions: "parade, a public walk by an ordered group of people, for display, inspection, and/or celebration" and "march, walk with a regular or measured tread, which may express determination; force someone to walk somewhere quickly." "March" includes the following definition which I am quoting directly, "walk along public roads in an organized procession to protest about something." This one draws out an important detail, that parades can be in private or otherwise controlled spaces, military parades for inspection being a good example. Marches, on the other hand, are public. That's part of the point – there is little point in expressing determination by the way you walk if there is nobody there to see the act. Armies march in hopes of intimidating whoever they are being sent off to fight or abuse.

UPDATE 2020-08-17 - I just bumped into an excellent point in a lesbian-friendly discussion forum pertaining to this thoughtpiece. The commenter noted that at one time "pride" wasn't "pride," but "gay pride" and the modifier "gay" was one of the first things lost as the corporate takeover and normalization of the marches into parades happened. This is such an important point, and one that I didn't realize I had missed. If there was a debate or protest about this change, it certainly did not get the same coverage as the new-style parades.

I think it is no coincidence that nowadays there are no "pride marches" by gaymen, bisexuals, and the few lesbians they allow into the procession. They are fully corporatized now, all about presenting displays, especially branded ones, and providing a venue for various types of virtue signalling by politicians, and obnoxious behaviour by mostly men claiming to be "sexual liberals." There is nothing preventing a march from including a celebratory element, but since the whole trajectory of "pride marches" has been guided by people devoted to access to the current status quo rather than changing it, it is no surprise that the notion of celebration is emphasized. That, corporate sponsorship, and merchandise, of course. Like any other standardized parade, these are hedged about by permits to walk only along specific streets during certain times, managed by all manner of volunteers expected to serve as parade marshals and make the space safe for moneymaking. Holiday parades have long been co-opted into tourism draws, and "pride parades" are far from exceptions on that point. The photograph is of lesbians marching in new york, and I can assure you from that photograph and accounts in various books and held in archives on-line and off, that the lesbians in question were having all manner of fun. They were celebrating working together to do something important to them, and feeling the joy of trouncing the constant pressure to be invisible and the continuous efforts to erase them from history, from any mainstream records, and frankly, from life itself. They were walking on public roads, with no concern for permission from police or city hall to do so. The whole point, at that march and in many others, was to express a determination to refuse invisibility and oppose oppression. Protest was an absolutely necessary part of the process. Dyke marches are part of this phenomenon. 
Contrary to the current attempts to redefine them as venues for men claiming they are lesbians to walk around openly carrying weapons to attack women with in general and lesbians in particular, they were founded as and properly are marches by lesbians, adult human females whose sexual interest is in other adult human females. Especially lesbians who cannot pass as heterosexual, the sort of lesbian who today "transactivists" want to redefine as "transmen" and insist they should be taking hormones and getting surgery accordingly, or demanding that they should have sex with trans-identified men instead of actual women. There's another important difference between marches and parades, besides political ones. There is no way to have a plausible parade without a significant number of people, enough to take up significant space. A plausible measure is whether a group of people intending to parade can fill up at least the width and length of one lane of a regular city block, so a downtown block, not one of the megablocks in an industrial area. That can still be as few as fifty people, before adding any floats or other accoutrements to take up more space and increase visibility. A march on the other hand, can be as few as five determined people with signs, if they select where and when they march with care and strategy in mind. Every avalanche starts from a few pebbles or a few rivulets of snow.

Misconceptions About Women's Suffrage (2018-09-04)

Canadian Women's Suffrage Delegation on its way to participate in a 1913 demonstration in washington, dc via Women's Suffrage and Beyond, original in the toronto star 4 march 1913.

With voter suppression so rampant in much of the world, including canada and the united states where people are encouraged to believe that sort of thing can't happen, it is hard not to spend time thinking about suffrage and its meanings. When it comes to women's suffrage in particular, I have to admit to getting more and more fed up with the misconceptions about when women won suffrage and what that has meant for women more broadly. Let me repeat, wherever women have suffrage, they won it. They weren't given it as a grand, magnanimous gesture. The right to vote can no more be given than can the right to self-determination. Which means that what women have had to win in so many places, is the enforcement of respect for their rights, in many cases re-enforcement after a recent change that blocked them from voting. This is one of the most critical misconceptions about women's suffrage. Here's another one.

UPDATE 2020-06-24 - Further to this thoughtpiece, have a look at Misogynist stereotyping in history, Part I: Those prudish Victorian women at culturallyboundgender. It provides a great overview of just what victorian era women were up against when it came to social and political activity outside the home, including of course for the purpose of fighting for suffrage.

I am astonished at how often other people are sure that women have had the right to vote for pretty much ever, and it is an ordinary thing. Universal women's suffrage reached its 50th year only in 2010 in canada. Full federal suffrage for women in canada as long as they were white was won 24 may 1918, and that date went completely uncelebrated by the federal government, which unfortunately should surprise no one. The racial exclusions of women and men from the federal vote didn't end until 1948, and women generally remained unable to vote provincially even longer. The last provincial holdout was québec, which legally prevented women from voting until 1940. 
The overall point here being, just in canada, women's suffrage is literally a recent enough win that many young women just old enough to vote today have great-grandmothers who couldn't legally cast a ballot. I should also add that women were not officially "persons" in canada until 1929. Yet another misconception about women's suffrage, regularly fallen into by women who entered the campaign for suffrage without any analysis of systemic oppression, was that once it was won, they could stop working against oppression of women and go home. If the vote by itself could be used to end structural oppression, as Emma Goldman noted, it would surely be illegal for anyone interested in ending structural oppression. Votes are important, and can be applied in strategic ways, especially if the electoral system is not corrupt with gerrymandering and voter suppression, among other problems. Voting can still be strategic even under those conditions, though what it can be applied to is not the same. The Feminists who had an analysis of structural oppression under their belts had quite a different view of suffrage campaigns. The campaigns were not an end at all in their view, but a beginning. They served as an introduction to political and social campaigning, from pamphleting and public speaking to peaceful civil disobedience and strategic property destruction. They showed that change came not all at once in a rush, but via stubborn and steady work to keep the pressure on and refuse to allow injustice to be reasserted by alternate means. A sort of "gateway issue" for that period, as activism against violence against women often is now. Perhaps it is the fault of the tragically mixed bag that is disney's rendition of P.L. Travers's Mary Poppins, in which the Suffragettes are presented as frivolous rich women who aren't too sure what they'll do with the vote, just that they want it. 
It's bad enough that when upper and middle class women do take part in activism they are often criticized for not immediately winning complete and total success in whatever they are working on, but then they are assumed to be engaged merely because the rest of the bridge club is too. Critique on the basis of not insisting on universal women's suffrage is fair enough, but parroting dumbass stereotypes or presenting them with a doublebind is not: damned if they don't work for change because that means they are shallow and uncaring, damned if they do because they are shallow and uncaring, not committed. Unfortunately, this sort of junk is not just a feature of 1960s disney movies. In real life as we know, the people who take part in activism of any sort have all manner of commitment levels and analyses of what they are activists about. That is to be expected. There is one more misconception I should note here, and that is the notion that women won suffrage by polite picketing, marches and a few speeches, something like multiple iterations of activities like those in the photograph illustrating this piece. This was far from the case. The women who took part in gruelling speaking tours had to deal with hecklers, being pelted with debris which if they were lucky did not include rotten fruit or shit, attempts to prevent them from speaking by refusing to rent them halls or declaring outdoor gatherings for speechmaking temporarily illegal, and even physical assault. Civil disobedience actions, including chaining themselves to furniture and parts of the architecture of parliamentary buildings, could lead to arrest, which was and is none too gentle, and subsequent fines and time in jail. Depending on the immediate circumstances, jail may be more or less dangerous. More dangerous if police are allowed to abuse prisoners with impunity, or women are deliberately locked up with imprisoned men who will be a danger to them. Or both. 
Readers are free to look up the english suffrage activists who went on hunger strike in prison, and were tortured by force feeding using rubber tubes forced down their throats and into their stomachs. And yes, some did indeed engage in targeted property destruction.

The Nineteenth Century Blahs (2018-08-26)

Bill the Cat by Berkeley Breathed in his comic Bloom County, june 1982. If you haven't read Berkeley Breathed's Pulitzer prize winning comic strip 'Bloom County,' you're in for a treat.

Practically speaking, the nineteenth century would have a lot to answer for if it was a person, based on the way it is usually constructed in terms of general impressions. Barring the steampunk writers, who bounce between depicting it as the best century ever and imagining alternate paths out of it (to my mind by far the more interesting subcategory), there seems to be a general consensus in the mainstream at least that the nineteenth century was awful, and anything that came out of it was terrible. Supposedly all the things identified as "sexual hang ups" are due to people in the nineteenth century, especially those infamous victorians. Internal combustion engines, of course, early computers, and it seems that queen Victoria's many children were on all sides of every european war in the twentieth century, having married into "royal" families all over the place. What this actually tells us about "royals" is beside the point in this way of looking at things. Certainly the origins of the engineering and managerial mindsets I have written about before could be pinned on the nineteenth century, and sometimes are, although this strikes me as a serious oversimplification. In any case, the point is, we have a particular construction of the nineteenth century that is hegemonic in at least the english-speaking world. It is apparently derived from the novels inflicted on many hapless students who have suffered through "english" classes that in origin were developed and imposed on english colonies, especially india, in an effort to pretend that only english books were real books. So we have a strange, skewed image of the nineteenth century in general and victorian england in particular via Dickens, Richardson, and a few carefully curated women including Eliot, Brontë and Austen. There was way more to read and far more going on than these few could ever encompass in their books and articles. 
Bearing this in mind, what things actually did come out of the nineteenth century, besides the ones just mentioned? Quite an interesting crop, to be sure not all good, but not necessarily what might be expected. In england, women finally won the right to vote and an increase in the number of women's public washrooms, facilitating all women's participation in daily life outside the home, and especially benefiting the poorest women, who needed to work outside the home whether they wanted to or not. The slave trade officially ended, and although that was far from the end of slavery, alas, wrecking the acceptance of it as somehow okay or inevitable was an important step. The "contagious disease acts" in england, which in effect defined any woman out in public as a potential prostitute who could be arrested and imprisoned, were finally repealed. Legislation for shorter work days and an end to child labour was first enacted in this century as well, though again, it didn't promptly end child labour or unreasonable working day lengths. That was a continuing fight, but this was a century of important successful steps to better conditions. The english practice of allowing the bodies of the destitute to be turned over to anatomists for dissection without their consent rather than allowing them to be buried decently ended. All of these things happened in england, which being at the centre of a powerful empire at the time, had considerable political and cultural influence as a result. I live in an officially "former british colony" so these are among the easiest examples to find. In the settler state of canada, things were not going so well for everybody. The nineteenth century was after all when John A. MacDonald and his patronage appointees were busy overseeing the mainly violent takeover of the rest of what today is labelled "canada" on maps. 
Periodic smallpox epidemics were still pulsing along the fur trade routes, although finally vaccination was travelling along them as well, and the terror of the disease began to abate as the century drew to a close. The first big canadian "tech craze" happened in this century, the advent of the bicycle, especially once it reached the shape with two same-sized wheels and soft tires originally referred to as the safety bicycle. Everyone who could was taking up bicycling, especially women, who could afford them even if they had lower class incomes, and these facilitated their ability to travel on their own and go to work and school. And let's not underplay their determination on this point, because they were successfully riding pennyfarthings in long skirts well ahead of the safety bicycle's development and sale. Before that, early cars had won many buyers among women in the middle to upper classes for similar reasons, but they didn't take off in the same way. Bicycles helped buoy up the Feminist drive for dress reform, which of course was huge across much of northwest europe and britain. It is forgotten all too often that this reform was all about making it socially acceptable for women to stop wearing clothing and shoes that were doing them active injury. The resultant simplification of their clothing actually led to further simplification of men's clothing as well, although in the end this may have had as much to do with mass production as political activism by the end of the century. So by all means, the nineteenth century was a mixed bag in terms of what got handed on. But when we get fed lines about sexual mores or technology changes coming from it and supposedly synonymous with whatever we are supposed to not like or want, it is worth checking the facts. 
It isn't even hard to do today in this age of widely accessible public libraries with those expert research guides, librarians, in them, who can help you get the most out of the internet on claims about the past, let alone the present.

Identity Politics Are Bullshit (2018-08-19)

There's a meme for everything, but some of the ones for this phrase were actually scary. Image courtesy of oph3lia on cafepress.com, march 2015.

UPDATE 2019-07-06 - I have encountered an excellent encapsulation of the overall point here by Julie Bindel, from an article published in 1996 just as the whole move to post-structuralism, post-modernism, so-called identity politics and backlash against women's rights started. "By identity politics, I do not mean fighting institutionalised oppression, such as racism and classicism, but using one's identity to silence and instil fear in others, rather than fighting for change." ("Neither an Ism Nor a Chasm: Maintaining a Radical Feminist Agenda in Broad-Based Coalitions," 247-260 in All the Rage: Reasserting Radical Lesbian Feminism, edited by Lynne Harne and Elaine Miller, Teachers College Press, New York 1996. UPDATE 2020-05-20 - It is disappointing but instructive to learn that "identity politics" started from a very different place, as one of the original developers of the notion, Barbara Smith has explained in recent interviews and writings. An excellent way to start delving into this is Olúfẹ́mi O. Táíwò's excellent article signal boosted at the black agenda report, Identity Politics and Elite Capture. Don't miss the powerful analysis of elite capture of system challenging politics and activism before going to read Barbara Smith's explanation of identity politics. UPDATE 2020-06-01 - For good or ill I have another update, and will probably have to write a follow up to this thoughtpiece. An unexpected place to learn about the politics of identity and the specific cultural meaning of identity for people who think they are white in the united states, and I suspect also elsewhere with somewhat different specific mechanics, Barry Spector's overview article Affirmative Action for White People first published at black agenda report in 2015 and revised 8 april 2020 is a solid place to start. 
UPDATE 2020-06-23 - Okay, it seems there is at least one more update to go here, this one to reference an article by Janice Williams at uncommonground, Transgenderism and the Difference Between Behaviour and Identity. Quite apart from the transgenderism question, she makes an intriguing argument for defining identity based on aspects of life that a person could not choose to leave behind or dissociate from of their own accord. By this logic, skin colour, nationality, and sexual preference would define identities. The things a person can leave behind of their own accord, argues Williams, are simply behaviours, and these can be changed at any time, though the impacts of a given behaviour may last a lifetime regardless of whether a person persists in it. So if a man or woman happens to have an appearance more associated with a gender stereotype they are not "supposed" to, and they cannot simply change it, then that is indeed their identity. Whether they decide to double down on finding a way to conform with gendered stereotypes anyway is a behaviour. But changing the behaviour does not alter the oppressive structure if it reinforces it via expressing conformity with gender stereotypes. UPDATE 2020-08-21 - Jo Bartosch is a scintillating journalist and social commentor overall, but I think her points here from Pups, Furries & Kinksters have no place in Pride: Homosexuals and Bisexuals Need to Unite to Put Fetishes Back in the Closet published at thecritic.co.uk, are an important addition here. "Today there is a balance to be struck; identity is a matter of personal choice but when one's fetish depends upon an audience or public validation "choice" becomes a social matter. Consent should not be assumed, we have a right not to be made bit players in another person's public fantasy.... Critics are warned not to "kink shame," and told that questioning public celebration of fetishes is evidence of a sexually repressed and closed mind. 
In stunning social volte face, shame is reserved for those who question the right to exercise one's fetish in public. But shame has a useful and protective social function, and when uncoupled from homophobia it is not necessarily wrong to judge those who indulge in fetishes that might be harmful to others. It is high time homosexuals and bisexuals united to push fetishes back in the bedroom closet where they belong."

Seriously, but not literally. If identity politics were literally bullshit, then we could at least use them for something genuinely useful, like fertilizing the garden or other practical applications. I believe there is an ongoing project in small scale methane generation from cow dung more generally to fuel cooking fires in parts of africa where cows and people have been helping each other keep body and soul together for millennia. But here, let me be clear to start with on what I mean by "identity politics." Clear and honest definitions are too rare right now (hence my perhaps quixotic fondness for the OED), but I see no reason not to set my cards clearly on the table. By "identity politics," I refer to a mode of rhetoric and hyper-liberal reasoning that is tightly focussed on individual feelings and individualized "solutions" that enable the individual in question to make peace with structural aspects of social and political life. It would be interesting to be able to point to harmless applications of "identity politics," but they seem to be extremely thin on the ground. In other pieces on the Moonspeaker, I have referred to individualized modes of problem solving, especially as applied to handling issues raised by social and political structures, as "taking the pressure off." The trouble is, it takes the pressure off one or a very few people, while simply leaving the structure in place. There is no assessment involved here, no consideration of such questions as, "What purpose does this structure serve? Who benefits by it?
What outcomes derive from it?" Not necessarily in that order, admittedly. This is not the same thing as actions that for example, help a woman escape from an abusive partner, or a political dissident escape imprisonment. Wildly diverse as these two examples may seem, the point is the difference between an action that helps a person handle a primary emergency that endangers them, and actions that are directed toward increasing comfort or some other nice to have but not necessarily challenging outcome. The funny thing about actions directed towards increasing comfort or some other nice to have but not necessarily challenging outcome, is that they are not necessarily harmless. Especially when the actions under consideration may or may not impinge on the workings of a social or political structure. I should probably also note what a social or political structure is. First, these are not just one person things. My personal politics, or the way I run my household, do not constitute either a political structure or a social structure. A political structure is for example, the various parts and pathologies that go into representative democracy as set up in north america. So think political parties, voting procedures, voting qualifications, and so on. Structures have lots of interlocking parts. It's harder to give tidy examples of social structures because so often the people living within them are unable or unwilling to see them so long as the social structures are not troubling them in some way. That, and in fact social and political structures are not completely separate. But for the sake of illustration, the complex of ideas that construct Indigenous people as less than human and bound to go extinct any time now, plus the actions non-Indigenous people take in the expectation that these ideas are true, are part of the racist social structures at work in north america. Maybe it would be better to call these socio-political structures. 
Still, it is clear that these are human constructions made and enforced via a consensus of thought and action between a critical mass of people. The consensus needn't be active, it need only be effective – lack of opposition of any kind is effective consent. There may be just or unjust, wanted or unwanted social and political structures. But because they are products of group thought and action, individualized critiques or approaches to dealing with them are pointless. All an individualized response or tweak to such structures does is create a special kind of exception for one or a few people meeting very specific criteria that uphold the status quo. This might sound like a good thing if we mistake an exception for a rule, which an exception is explicitly not. That means it can be withdrawn at any time, and the structure in question remains in place unchanged, and perhaps even stronger than ever. For structures that are just and helping make things happen that are positive, that can seem mostly okay, especially for unconscious exceptions that are unfair that people didn't realize they were making. An easy one to point to would be any change that increases democratic effectiveness and accountability, and efforts to ensure that polling stations are useable for persons living with disabilities. It's much harder when it comes to unjust structures, because they are unjust in order to benefit somebody, and that somebody is probably going to resist changes in any way they can. Maybe that somebody actually includes people who want exceptions, because they argue that they are exceptional. Their presumed exceptionality is supposed to be an argument in favour of the exception, because the people qualifying for it will be limited and it won't change anything much. I get it, this sounds abstract. Here are a couple of specific, real life "identity politics" exception arguments. They affect and affected communities I am part of.
During the 1980s and 1990s, a significant number of gay men argued that they couldn't help themselves, they were born homosexual, therefore they should not be stigmatized or punished for engaging in same sex relationships. They pointed to their stable relationships, regular jobs, and standard consumerist behaviour, and firmly insisted that bar culture and bath houses were either a fringe sort of thing or a sort of youth stage that every gay man grew out of. They were and are regular guys! Certain lesbians opted for similar tactics in the 1990s and early 2000s, although in that case it had a lot more to do with insisting that lesbians could look like straight women and were willing to perform standard femininity. It sounds like it should be okay to apply these arguments, and it seems everybody accepted them out there in the mainstream. Never mind that every person, gay, lesbian, or simply disinclined or unable to perform gender stereotypes got shoved firmly under the bus. But, then again, that is to be expected, right? Exceptional people are always a subset of the larger group. The people that win the exception get to be more comfortable and access more nice to haves. Meanwhile, the socio-political structures predicated on gender stereotypes, enforcing them, and limiting people's life chances based on them, remain as firmly in place as ever. I suspect that there are people who feel that "identity politics" could ultimately yield significant changes to social and political structures by sheer mass. The number of exceptions could finally become so many that the whole thing comes crashing down, and so unjust structures are overcome once and for all, and just structures are improved. Maybe that works for just examples, if we can be sure they are just. But when it comes to entrenched unjust structures, "identity politics" are bullshit.
All they do is divert energy and co-opt people who would otherwise be in a position to fiercely and effectively resist until the unjust structure had to change or be destroyed altogether. "Identity politics" can persuade us to accept a bit of candy or other nice goody to shut up and go along, unless we are careful, because it seems easier, faster, more comfortable, and appeals to our feeling that the people that get tossed under the bus aren't very much like us anyway. And they have the added sting that it feels lousy to have it pointed out to us that we have allowed or even pushed somebody else under the bus. So if we have become embroiled in "identity politics," it can be very hard to get out, because it is hard to live down having done something that turned out badly for others or even ourselves. That's how complicity works. And that, in rather more than a nutshell, is why "identity politics" are bullshit.

The Victorians Were Not Prudes (2018-08-12)

A victorian era religious official, who would usually be characterized as a 'prude,' june 2018. Steampunk art from the Dover Steampunk Sourcebook, 2010.

Just in case anyone is expecting this, I will not be discussing Foucault and his repression hypothesis about victorians and sexuality. I'm not wholly sympathetic to him, so it is best to read his stuff for yourself. Instead, as usual I start from my ever useful OED, which reminds me of the similar etymological tracings undertaken by Feminists like Mary Daly, Jane Caputi, and Julia Penelope, who looked more deeply into the word "prude." It's an oddly shaped word, with spelling strongly indicative of a change in pronunciation that managed to get caught in english orthography. This is a vanishingly rare occurrence in the english language. Anyway, thus spake the OED, "prude, a person who is or claims to be easily shocked by matters relating to sex or nudity." It adds in the etymological section that this word is a back formation from french "prudefemme" meaning originally "worthy woman," from older french prou that simply meant "worthy." The semantic derogation of positive terms for human female beings into insults is well documented in english, even though most work on it has stopped since Julia Penelope's passing a few years ago.

UPDATE 2020-06-23 - I have made some light updates in this piece to remove an accidental repeat of incorrect information about the five women generally best known today as the victims of "Jack the Ripper." There is in fact no evidence that any of these women except one had ever been forced to sell sex to survive. Their names are Mary Ann "Polly" Nichols, Annie Chapman, Elisabeth Stride, Catherine Eddowes, and Mary Jane Kelly. To see the receipts on this point, Hallie Rubenhold's 2019 book The Five: The Untold Lives of the Women Killed by Jack the Ripper is a tour de force. Rubenhold is able to bring together remarkably detailed pictures of these women and their lives, including a genuine sense of their complexity and the struggles they faced in the precarious working classes.
I think Rubenhold makes a powerful point in showing how merely being poor rendered these women "expendable" in the eyes of many people in more secure economic circumstances, and presuming they were prostitutes rationalized away this dehumanization. UPDATE 2020-12-07 - Now some readers might object here, and insist that maybe victorian men were not prudes, but victorian women were. This claim is total nonsense, and derives from misogynist stereotyping. One of the best unpackings of this nonsense is at the blog CulturallyBoundGender in Misogynist stereotyping in history, Part I: Those prudish Victorian women. It is easy today to sneer at victorian women for apparently being terrified of sex. But the sad truth is they had reason. Between cultural practices that meant many women faced one of the most dangerous potential outcomes of sex, pregnancy, in a state of malnourishment, lack of physical fitness and at times already ill with tuberculosis, the all too likely final outcome for pregnant women was death. Without access to contraception, victorian women were entirely rational if they feared and wanted to avoid sex. Just on a basic practical level, no, the victorians, citizens of england between 1800 and 1901, were not easily shocked by matters relating to sex or nudity. How is it possible to be so sure? Have a look at the most famous "shocking events" of this period that were used to flog newspapers in an early modern iteration of yellow journalism. The murders by serial sex killer "Jack the ripper," one of several serial murderers preying on impoverished women in this period, for instance. It is easy to find reams of advertisements for devices intended to punish and prevent sexual response and coy references to so-called "female complaints." This is the same period in which Rasputin won fame as a prominent example of an antinomian christian whose charisma was as much sexual as religious. 
It also bears pointing out that this was an era of resurgent evangelical religion and the attendant uncomfortable reminder that religious enthusiasm and sexual enthusiasm are not separate from each other. This was also a period that includes many examples of obsessive labelling and categorizing as part of the general colonizing push of the english government and military in the period, with all the attendant anxieties about control. That meant not just military control, but social control to prevent "miscegenation" between "whites" and "lower races" and what was framed as "degeneration" but had more to do with fear that "whites" would begin to respect and sympathize with the peoples they were expected to enslave. So far, I can't find examples of people being shocked per se, or even seriously claiming to be shocked, about sexual behaviour or sexualized violence. More often than not, it seems to me they expressed shock at these being mentioned "in polite company" if they were not "lower class" people. Oh, and there was plenty of pornography around, and various attempts to define any woman or girl found walking outside in public alone or seemingly alone as a de facto prostituted woman or girl who should be jailed and examined without her consent for disease. "Prostitution" was a major issue because a significant portion of men were obsessed with somehow locking women and girls up permanently and thereby ending such key efforts to end their power to oppress women as the campaign for the vote and separate female personhood from males. Of course many white women did manage to win the vote through the nineteenth century at least in north america and england, and brought down the so-called "contagious diseases acts" intended to lock all women in their homes too. Sex as such was not the central matter at issue. The availability of "lewd books" and similar materials that today are considered laughably tame, hardly drew the same level of concern. 
Victorian era claims that "the lower classes" were commonly "living in sin" rather than marrying have now been firmly debunked, and again, such claims had and indeed have more to do with a desire for increased social control among those making the claims in mainstream outlets. The key detail to check is always what the proposed solutions are, because the campaigners most amplified by the popular press primarily advocate increased surveillance and control of where people can go and what they can do with their time. And yes, as Foucault also wrote, the rhetoric in the cheap broadsheets, newspapers, and political speeches by members of parliament should not be simply conflated with or interpreted as people's actual feelings and behaviour in the victorian period. All of which begs quite loudly the question of where this idea of victorian prudery came from anyway. From what I can tell, people living in england today do not particularly subscribe to this construction of their ancestors at all, whatever their social position and experience. In my perambulations through my reading for classes and unsystematic perusal of books of literary criticism working with literature from that era, the common themes that come up are quite different. Various writers reflect on changes in the prevalence of openly expressed and performed religion, the changing role of clothing as a marker of social status and conspicuous consumption, and the struggles people had with the changing status of women and children. I have read down to earth explanations of how yes, people in england and anyone living in a similar climate and cultural milieu did wear a lot of clothes. But this had nothing to do with sex or perceived potential sexual provocativeness, and everything to do with lack of central heating and damp, cold weather. People wore a lot of clothes if they could because otherwise they were cold.
Servants couldn't openly talk about "unmentionables" around their "betters" because that was how they were expected to perform acquiescence to their supposed inferiority. Which means that whenever we read or hear of victorian era – or any era, really – shock at sex or nudity, we need to check the context. Of course there can be good reason for such a reaction, but with so much tied to expected behaviour and performances that would shore up the status quo, we may not be getting what we expect or think we know. As always, there are plenty of great books and websites about the victorian era that are worth your time if you'd like to see something other than caricatures of people in that time, especially if you happen to live in a place recovering from english colonialism, which peaked in that very period. Online one of the best and most venerable sources is the Victorian Web, chock full of wonderful stuff, including sound files, images, essays in plain language, and links and references to additional sources. It is also well worth having a look at the surviving steampunk websites out there, as they often include thoughtful discussions of culture and mores in victorian england.

The Question of Efficiency (2018-08-05)

Gear image from the northjersey.com website, associated with a notion of efficiency, circa june 2018.

Here is another word exploration thoughtpiece, which I thought would end up being quite short. Except as it turns out, the insistent visual association between interlocking gears and the terms "efficient" and "efficiency" surprised me. Perhaps it shouldn't have, because of the current fetishization of fuel efficiency in machinery. Gears commonly serve as metonyms for machines, especially ones now considered rather old-fashioned because they don't have a computer shoehorned into them somehow. On the other hand, I can say honestly that my surprise reflects the fact that in my more immediate experience, "efficiency" and "efficient" are invoked in relation to human behaviour and that other new age shibboleth, "productivity." Both terms are, alas, problematic, even more so than they might otherwise be simply because language never stops changing, since they are encoded with such powerful and destructive presuppositions. In the efficiency-efficient dyad, the definition and application of the term now depends on a demand that we accept that humans and machines not only can be made equivalent, but that it is morally right that they should be. That demand is no longer covert for anyone, although I doubt it was ever covert for anyone working in so-called blue collar and pink collar jobs. Still, let's drop back to the information in the trusty OED, which is trusty because it is written on historical principles and that means the compilers are making an effort to reflect the real world usage of english words. "Efficient" has three senses in the standard edition of the dictionary I have, "achieving maximum productivity with minimum wasted effort or expense; a well-organized person; preventing the wasteful use of a particular resource." This all sounds quite dry and clinical, right? Except, wait a moment. Productivity of what? Whose effort and whose expense? How are any of those things measured? A person well-organized for what?
What makes the use of a resource – whatever we may think that is – wasteful? These aren't intended as rhetorical or disingenuous questions. Each one is intended to draw out the presuppositions in these definitions. They are certainly rooted in the late 18th century as my OED also notes, and that is the all too familiar era of early capitalist expansion based in the use of machinery to replace skilled labour and drive people out of subsistence work into the working class. I have already written in a previous thoughtpiece called Mixed Bag about one aspect of this period, the development of engineering as a profession and its pessimistic (at best) view of human nature. The engineering and management expectation that machines obey and workers ought to behave like machines truly comes from this period, even though it is also in many ways a modernized version of Aristotle's wishful thinking definition of slaves as tools that can think, but preferably not too much. If the image associations I found while looking for a bit of clip art for this thoughtpiece are any indication, this disrespectful view of human beings has been propagandized all too well. To me at least, the question of efficiency goes right back to efficiency in what. The conglomeration of economic structures and jobs right now that practically all humans are caught up in right now is remarkably good at producing toxic waste and not much else. Lots of plastic and related hydrocarbon products, waste heat, released carbon dioxide, excessively concentrated chemicals, and all manner of pointless tchotchkes that find their way to the garbage in days if not hours. Fidget spinners are a perfect example right now, as the fad for them blows wider and they go on sale three for a dollar. Right now there are factories that are perfectly tooled to switch to producing thousands of little things of that nature because they have been caught up in a fad and so will temporarily be highly lucrative. 
Adult equivalents hide behind lots of fig leaves, although there is an argument for the successive waves of cell phones, phablets, tablets, and netbooks being one. I think it is fair to ask whether that is actually an expression of efficiency that we want a significant portion of human imagination and effort to go into. If stripped to its barest minimum being efficient means doing whatever activity with the least amount of energy and stuff used up possible, the possibilities for what we could be efficient at are much wider than the usual connotations of the word suggest.

Peace is Practical Work (2018-07-28)

The logo of one of the best podcasts on the web, Métis In Space, Otipêyimisiw-Iskwêwak Kihci-Kîsikohk. What do you mean you haven't subscribed (itunes | stitcher | for other podcast players) to it yet?

I have mentioned the brilliant podcast Métis In Space, Otipêyimisiw-Iskwêwak Kihci-Kîsikohk in other thoughtpieces and a couple of random sites features. It comes in here as well for a more detailed reason, because Molly Swain and Chelsea Vowel have powerfully imagined an Indigenous future that is peaceful and enshrines healthy relationships between all peoples and the land, and between Indigenous and non-Indigenous peoples. Part of their imagining reflects teachings they have received and cousins of those teachings I've received myself, about peace. Peace isn't easy. It doesn't conveniently fall out of everyone somehow becoming too dazed and silly to fight anymore. That it is difficult does not make it either impossible or unrealistic. I mistrust people who claim peace is hard and therefore impossible who then promptly turn around and insist that infinite energy, infinite cash, and continuous space travel for anyone who can pay for it are all possible. Two of those are definitely impossible on the basis of physics, and the other possible and obviously difficult, requiring intensive investment of time, energy, and imagination. Well, if we somehow can find time, energy, and imagination for space travel for the one percent who hope that they will finally rapture themselves away from a difficult situation once and for all, then the issue with making peace has to do with deciding where to put the time, energy, and imagination, not possibility. Perhaps one of the issues with the way making and keeping peace is envisioned is that so often it is presented as a state that once achieved will never end again. If that is the expectation, then no form of peace we experience in our lives will be recognized as "real" because for one or another reason, so many forms of peace are temporary. Yet the expectation is nonsensical in itself, because everything changes whether or not it ends. 
There are many sorts of personalized peace, such as the quiet hours we may spend reading, sleeping, or whatever. Those are intended to be temporary for the most part, if only for practical reasons. But in the context of peace being hard work, this is not intended to refer to a purely personal peace, but a social peace, a state of social being. A state of social being that is characterized by lack of interpersonal violence and the ability to resolve problems and conflicts by non-violent means in a just and fair way, which generally demands a commitment to talking things out and taking the time to do it. The social aspect is where the convenient pessimism comes in, because it is supposedly too hard to get enough people committed to acting in the ways that create and maintain peace and then doing so for extended periods. This is supposedly too hard because people are inherently bad, and "might makes right" because the bigger or less ethical will always win. Perhaps this could be true if we all believe it, since medical professionals make surprising discoveries about the wild things we can convince ourselves of to the point of meddling with our own physical responses almost every day. I find myself thinking of a well-done scene in the royalist propaganda movie The King's Speech, in which stuttering Albert stutters not at all – so long as he can't hear himself speaking. The hard, practical work of making and keeping the peace takes time. Reading early settler records of their perceptions of Indigenous governance and peacemaking practices, the writers are forever moaning and whining about how much time everything takes. They insist that it isn't efficient, and sometimes even state bluntly that it keeps them from taking whatever they want, which makes them angry. All of which sounds amazingly similar to a badly behaved five year old, and these were typically grown men, many of whom were well-educated and considered upper class in their time. 
They were annoyed by any resistance to their thieving, invading ways, and they refused to acknowledge or accept that these very ways are what made it possible for them to live on Turtle Island and further south. Not their germs, not their incessant thieving and warfare. The insistence of Indigenous governments and individuals on working out peaceful ways of coexistence. Obviously this did not and does not mean Indigenous peoples never fought back, or that at times Indigenous peoples did not opt for war instead of peace, for a whole range of reasons that we may or may not understand let alone agree with. What to do then, when there are peoples who insist on war over peace, even if that means war far away from their own homes, so they feel like war must be worth the risk? I think we can safely conclude that we resist oppression and do the practical work of peace anyway. There is no other way to survive. If no one Indigenous or non-Indigenous had done this practical work, none of us would be here. Those are the people who win a chance at life for the rest of us in the future, not the latest character claiming that bigger weapons run by robots or brand new space ships will save the chosen few.

Search Patterns (2018-07-21)

Search engines are wonderful tools, for all their now more often frustrating than hilarious flaws. Those of us who have been surfing the net since the early 1990s probably all have stories about the startling and funny results search engines could produce in those days. There is a certain irony in how much more AI-like those absurd results seemed, because of course they reflected the mistakes and simplifications made by the human programmers. Not that anyone was talking much about AI then outside of some research laboratories, because this was when reporting problems by email could spawn an email back – from a person. I know, sounds impossible, but that was a thing.
And even then, in the "stone age" of the web, the search engines were already wonderful tools. As few as ten years ago, any database with a web page front online was considered part of the now infamous "dark web" because search engines were not yet programmed to deal with them. Now databases are ubiquitous, and can be broadly divided into those that require a login and those that don't, and those that don't are indexed by search engines multiple times a day. But as archivist Emily Lonie points out, research skills beyond search engine use are not taught as commonly or thoroughly as they had been. The combination of the apparent power of search engines and the ever more overloaded school day probably isn't helping ensure students get a chance to learn much more about how to do research even with a library's electronic resources. There are still databases that can only be used on dedicated work stations, many of which have not been updated to run on newer operating systems, so they need to run in a virtual machine. That acknowledged, the main research materials students are not necessarily learning how to use effectively are the non-digital ones, the ones that haven't been digitized or are too expensive to digitize, or cannot be used effectively as a digital scan. The latter category can include plenty of digitizable flat things, especially maps and large diagrams. UPDATE 2019-01-21 - Towards the end of last year I had quite a surreal experience with the latest iteration of the card catalogue and search software most commonly used in public and university libraries in canada. Among the more recent additions to search engine databases are ever more extensive thesauruses packed full of synonyms and common misspellings of search terms so that the software can try to come up with something vaguely useful even if the spelling we come up with is at best, creative. 
In online search engines the engagement of this feature is usually signalled by a blurb under the search box on the results page to the effect of "Did you mean x? Restrict search to y," where "y" is what you actually typed and the phrase referring to "y" is a link that will run your originally typed search. The library catalogue I was searching did not provide such useful indicators in its search results. Instead, it silently "corrected" my search terms, because the author's last name, though far from obscure, was treated as a misspelling. In the end I had to phone the library help desk, and a librarian and I spent some time experimenting to find out how to route around this behaviour.

Many of us interact with libraries, even public ones, primarily via a computer from which we place holds on books that we pick up, rather than by searching the stacks. So when a question or project comes up that draws us into the library and it turns out that we can't find what we need just from the computer stations, it can be a revelation to discover what else is available in there. For example, the vertical files (storage for materials that don't warrant call numbers), or all those tapes and films that the library often still has the equipment to play. If the library includes one or more archives or sets of personal fonds, then there may well be more than papers and books to look at. There may also be objects or object collections included in them, especially from times and places where young people, particularly young women, were encouraged to put together memorabilia of special events. The current iteration of this practice is what goes mainly under the label of "travel notebook," in which the traveller has tucked tickets, wrappers, and small objects alongside their written, drawn, and stamped observations. They can be absolutely amazing, no fancy notebooks and scrapbooking supplies required. This suggests another factor in the growing interest in "the analogue."
Before the computerized library catalogue, the tool for getting started navigating the library stacks was the card catalogue, an analogue database. Once a person got used to the way the call numbers related to different topics, whether in the dewey decimal system or the library of congress system adopted by most university and college libraries, the catalogue became less necessary except for new topic areas. That is, a person could internalize a virtual map of the library stacks by getting familiar with the card catalogue, and yes, they could take advantage of serendipitous search as they walked between sections. Well, that is, as long as the person in question wasn't just requesting the books be pulled for them by library staff, a service rarely available outside of special collections and institutions like the british library until the 2000s.

As Emily Lonie points out in the article linked to above, archives often have their own customized tools for searching them, including online tools that see use all too rarely, even if provided with instructional videos and tutorials. Providing useful getting-started information is a difficult art because one size emphatically does not fit all. All too many people bring with them a belief that they didn't have to learn anything to use a search engine, even though they inevitably did, and have made their own choices about whether to just tolerate the search results they get or refine their queries. Ease of learning and lack of need to learn are not at all the same thing, so I suppose that could be rephrased as: all too many people underestimate the research skills they have already learned, and the effort they put into learning them. That's a real shame.

A Reconstructive Problem (2018-07-14)

Neanderthal facial reconstruction based on the skull of the Gibraltar 2 Neanderthal specimen, image courtesy of the anthropological institute at the university of zürich, 2010. This wonderful photograph is widely reproduced but too rarely with its origins noted, evidently because they were surprisingly tricky to find in 2016. Perhaps they were considered common knowledge, as even Heather Pringle did not mention them in a blogpost from the same year. I finally found the basics of the citation in the abc science article, Humans Interbred With Neanderthals: Analysis, 7 may 2010.

Facial reconstruction is a remarkable development in hybrid art-science applications. It can help in the investigation of crimes and missing persons cases, and add impressive vividness to recreations of ancient peoples. Due to how oriented we humans are to watching and reading the eyes of other people so long as we can see them, the visuals these reconstructions provide can make ancestors real in surprising ways. They are also often controversial. Try reading up on the presumed skull of Philip of Macedon, let alone the remains of the Ancient One found in the lands of his present-day relatives, the Confederated Tribes of the Colville Nation. Or the so-called "Cheddar Man," whose DNA revealed, much to the annoyance of many people who think they are white, that he was probably dark skinned, dark and curly haired, and blue eyed. Deciding what hair, skin, and eye colour to attribute to ancient ancestral remains is non-trivial, and without additional evidence many reconstructors opt to select the options most familiar to them. There is an important element of artistic license in these reconstructions, and that can go awry if not applied thoughtfully. Indeed, the Cheddar Man case is a great example, because the naïve assumption, outside of the lab at least, was that he would be fair skinned, blonde, and blue eyed. This is the case even though it seems to me that the english are more often brown haired and brown eyed like most of the world, because those traits are genetically dominant. The evidence that skin colour changes rapidly in isolated populations in the right climate and food conditions expands nearly every day, so of course that impression is no help at all in such questions. The extremes of emotion that reconstructions can raise are remarkable, but probably shouldn't be surprising. A personal bugbear of mine is the insistence on reconstructing ancient peoples all over the world, unless they are presumed to be white-skinned, as if they never knew or invented a comb.
But the issues are not that minor. They are very much about visibility, who is allowed to be seen, and whose existence in the past and the present is acknowledged. To this day, there are people in the americas who are convinced that the Indigenous peoples here massacred a supposed predecessor "white race" consisting of lost Hebrew tribes or lost Welsh sailors. Projection is an extremely nasty habit. Other versions of this execrable myth have been forced onto any place where people who think they are white are not in the majority. Seeing non-white people in the mainstream media not in the form of a stereotype or caricature is still all too rare, even though in north america "white" people are not the visual or practical majority. It is dangerous to play into this sort of racist nonsense, and the current media environment doesn't help, because playing up controversy increases page views or sales of the ever dwindling hard copies of newspapers and magazines.

Truth be told, the question of tidied hair versus a bird's nest is not minor. Hair is a key element of how we present ourselves, and to date there is no human culture out there, or that we can reconstruct in the past, where nobody cared a whit about their hair or that of other people. Considering our close genetic relationships to other primates that customarily groom each other as a mode of social interaction, it stands to reason we would have a persistent interest in how others present themselves. We can rationalize the interest in all manner of ways, such as that a full head of clean hair indicates both physical and mental health. But hair is also used to mark social status, and how we treat our hair can conform with or defy all manner of social expectations of us based on our ethnicity, class, and sex. Africans and people of African descent deal all the time with the racist assumption that their hair must be dirty if kept in braids, beautiful afros, or not straightened.
So do Indigenous peoples of the americas, who still have to fight back against efforts to force their children, especially boys, to cut their braids. So opting to reconstruct an ancient person whose skin is presumed dark with unkempt hair is not a neutral thing to do. I can well imagine a person wondering if there is anything that isn't fraught with social and political challenges these days. The kindest answer is to point out that the rooms of the museum and the annals of archaeological reconstruction are no refuge; they are inevitably the centre of the storm.

Queer Intimations (2018-07-07)

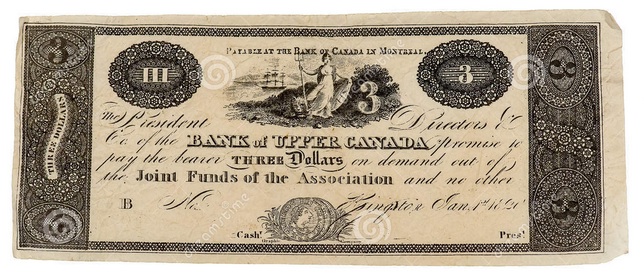

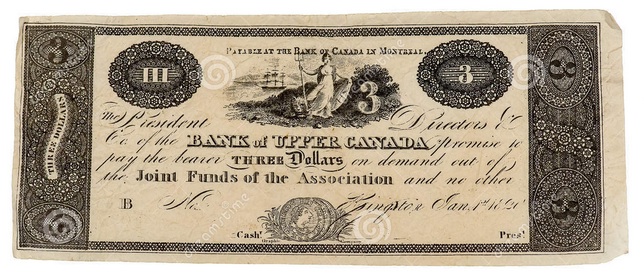

Royalty-free image of an 1820 three dollar bill issued by the bank of canada in montréal, courtesy of photographer Edonalds at dreamstime.com, june 2013.